
QA RAG with Self Evaluation II

For this variation, we make a change to the evaluation process. Along with the question-answer pair, we also pass the retrieved context to the evaluator LLM.

To do this, we add an additional itemgetter function in the second RunnableParallel to collect the context string and pass it to the new qa_eval_prompt_with_context prompt template.

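    from operator import itemgetter
    from langchain_core.runnables import RunnableParallel, RunnablePassthrough

    # retriever, format_docs, the prompts, the LLMs and the parsers are defined earlier in the series.
    rag_chain = (
        # Step 1: retrieve the context and pass the original query through.
        RunnableParallel(context=retriever | format_docs, query=RunnablePassthrough()) |
        # Step 2: generate the answer, carrying the query and context forward.
        RunnableParallel(reply=qa_prompt | llm | retrieve_answer, query=itemgetter("query"), context=itemgetter("context")) |
        # Step 3: evaluate the answer against both the query and the context.
        qa_eval_prompt_with_context |
        llm_selfeval |
        json_parser
    )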
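To see what the evaluation prompt receives, the data flow of this chain can be mimicked in plain Python. This is only a sketch: the stand-in functions fake_retrieve and fake_answer and the sample strings are hypothetical, and no LangChain objects are involved. It shows how itemgetter picks the query and context out of the first step's dictionary so that all three keys reach the evaluation prompt.

```python
from operator import itemgetter

# Hypothetical stand-ins for `retriever | format_docs` and
# `qa_prompt | llm | retrieve_answer`; they only build strings.
def fake_retrieve(query):
    return f"docs about: {query}"

def fake_answer(inputs):
    return f"answer to '{inputs['query']}' using '{inputs['context']}'"

def step_one(query):
    # Mirrors the first RunnableParallel: every branch receives the same input.
    return {"context": fake_retrieve(query), "query": query}

def step_two(inputs):
    # Mirrors the second RunnableParallel: itemgetter forwards existing keys unchanged.
    return {
        "reply": fake_answer(inputs),
        "query": itemgetter("query")(inputs),
        "context": itemgetter("context")(inputs),
    }

eval_inputs = step_two(step_one("What is LCEL?"))
print(sorted(eval_inputs))  # the keys available to the evaluation prompt
```

Without the extra itemgetter("context") branch, the dictionary handed to the prompt would contain only the reply and the query.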

Implementation Flowchart :

One of many widespread ache factors with utilizing a series implementation like LCEL is the problem in accessing the intermediate variables, which is essential for debugging pipelines. We have a look at few choices the place we are able to nonetheless entry any intermediate variables we have an interest utilizing manipulations of the LCEL

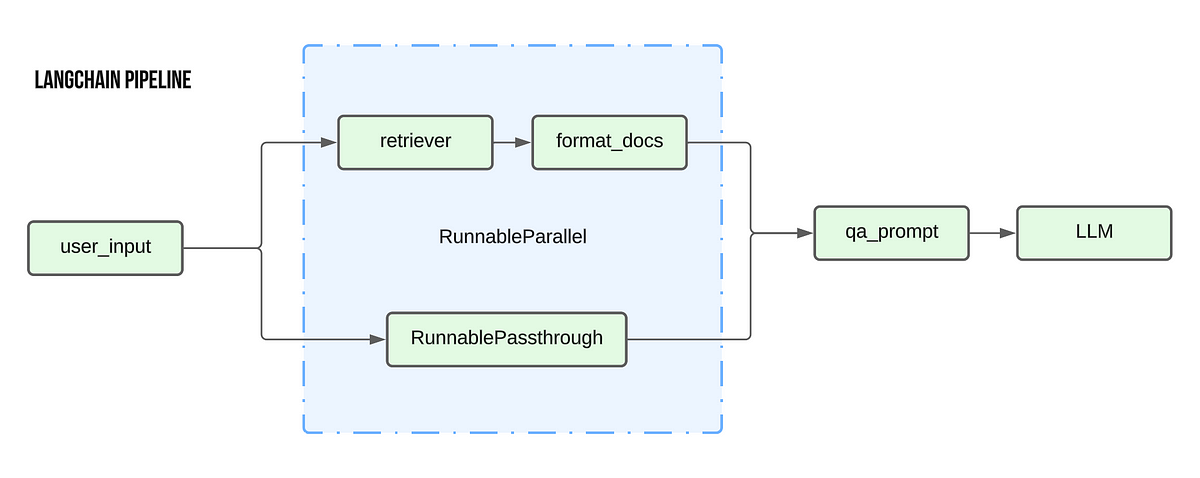

Using RunnableParallel to carry forward intermediate outputs

As we saw earlier, RunnableParallel allows us to carry multiple arguments forward to the next step in the chain. So we use this ability of RunnableParallel to carry the required intermediate values forward all the way to the end.

In the example below, we modify the original self-eval RAG chain to output the retrieved context text along with the final self-evaluation output. The main change is that we add a RunnableParallel object to every step of the process to carry forward the context variable.

Additionally, we use the itemgetter function to clearly specify the inputs for the subsequent steps. For example, for the last two RunnableParallel objects, we use itemgetter("enter") to ensure that only the "enter" value from the previous step is passed on to the LLM and JSON parser objects.

    rag_chain = (
        RunnableParallel(context=retriever | format_docs, query=RunnablePassthrough()) |
        RunnableParallel(reply=qa_prompt | llm | retrieve_answer, query=itemgetter("query"), context=itemgetter("context")) |
        RunnableParallel(enter=qa_eval_prompt, context=itemgetter("context")) |
        RunnableParallel(enter=itemgetter("enter") | llm_selfeval, context=itemgetter("context")) |
        RunnableParallel(enter=itemgetter("enter") | json_parser, context=itemgetter("context"))
    )

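The output from this chain is a dictionary with two keys: "enter", which holds the parsed self-evaluation, and "context", which holds the retrieved context text.

A more concise variation: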

    rag_chain = (
        RunnableParallel(context=retriever | format_docs, query=RunnablePassthrough()) |
        RunnableParallel(reply=qa_prompt | llm | retrieve_answer, query=itemgetter("query"), context=itemgetter("context")) |
        RunnableParallel(enter=qa_eval_prompt | llm_selfeval | json_parser, context=itemgetter("context"))
    )
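The step-by-step and concise versions should return the same dictionary, because chaining steps inside a single branch is equivalent to threading the same value through separate steps. A minimal plain-Python sketch of that equivalence follows. The helpers parallel and pipe are hypothetical and only imitate RunnableParallel and the | operator, and the three stage functions are stand-ins for the prompt, the evaluator LLM and the JSON parser.

```python
import json
from functools import reduce
from operator import itemgetter

def pipe(*steps):
    # Imitates `a | b | c`: feed each step's output into the next one.
    return lambda x: reduce(lambda acc, step: step(acc), steps, x)

def parallel(**branches):
    # Imitates RunnableParallel: run every branch on the same input, collect a dict.
    return lambda x: {name: branch(x) for name, branch in branches.items()}

# Hypothetical stand-ins for qa_eval_prompt, llm_selfeval and json_parser.
def eval_prompt(d):
    return f"Q: {d['query']} A: {d['reply']}"

def self_eval(prompt):
    return '{"score": 1}' if "A:" in prompt else '{"score": 0}'

def parse(text):
    return json.loads(text)

ctx = itemgetter("context")

step_by_step = pipe(
    parallel(enter=eval_prompt, context=ctx),
    parallel(enter=pipe(itemgetter("enter"), self_eval), context=ctx),
    parallel(enter=pipe(itemgetter("enter"), parse), context=ctx),
)
concise = parallel(enter=pipe(eval_prompt, self_eval, parse), context=ctx)

sample = {"reply": "42", "query": "meaning of life?", "context": "retrieved text"}
assert step_by_step(sample) == concise(sample)
print(concise(sample))
```

The longer form is still useful when more than one intermediate value needs to be exposed, since each extra RunnableParallel is a point where another key can be added.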

Using global variables to save intermediate steps

This method essentially uses the principle of a logger. We introduce a new function that saves its input to a global variable, thus allowing us to access the intermediate variable through the global variable.

    context = None  # global variable that will hold the retrieved context

    def save_context(x):
        # Save the input to the global variable, then return it unchanged.
        global context
        context = x
        return x

    rag_chain = (
        RunnableParallel(context=retriever | format_docs | save_context, query=RunnablePassthrough()) |
        RunnableParallel(reply=qa_prompt | llm | retrieve_answer, query=itemgetter("query")) |
        qa_eval_prompt |
        llm_selfeval |
        json_parser
    )

Here we define a global variable called context and a function called save_context that saves its input value to the global context variable before returning the same input. In the chain, we add the save_context function as the last step of the context retrieval step.

This option allows you to access any intermediate step without making major changes to the chain.
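As a usage sketch, the saved value can be read back from the global once the chain has run. The snippet below imitates that with plain Python functions instead of a real retriever; fake_retrieve and run_chain are hypothetical names. One caveat worth keeping in mind: the global holds only the most recent value, so it is overwritten on every invocation and is not a reliable place to look when several invocations run concurrently.

```python
context = None  # module-level slot for the latest retrieved context

def save_context(x):
    # Store the value as a side effect, then pass it through unchanged.
    global context
    context = x
    return x

def fake_retrieve(query):
    # Hypothetical stand-in for `retriever | format_docs`.
    return f"docs about: {query}"

def run_chain(query):
    # The downstream result never includes the context, yet it stays inspectable.
    retrieved = save_context(fake_retrieve(query))
    return {"evaluation": f"judged {len(retrieved)} characters of context"}

result = run_chain("What is LCEL?")
print(result)
print(context)  # the intermediate value captured during the run
```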

Using callbacks

Attaching callbacks to your chain is another common method for logging intermediate variable values. There's a lot to cover on the topic of callbacks in LangChain, so I will cover this in detail in a different post.
